AI is everywhere in marketing decks—but which parts actually help you ship better decisions, and which are just shiny slides? Here’s a pragmatic guide to separating signal from noise.

Quick answer: what is AI analytics?

AI analytics applies machine learning and natural-language techniques to collect, clean, analyze, and act on digital behavior data with minimal manual rules. In plain terms, it’s software that learns patterns (rather than just following if/then rules) to surface insights, predict outcomes, and trigger actions.

Where AI really helps today

1) Anomaly detection & data quality

- Use it for: Auto-catching tracking breaks, traffic spikes, tag misfires.

- Why it works: Models learn your normal seasonality and alert on true outliers.

- Prove it: Fewer bad decisions from dirty data; faster mean-time-to-detect issues.

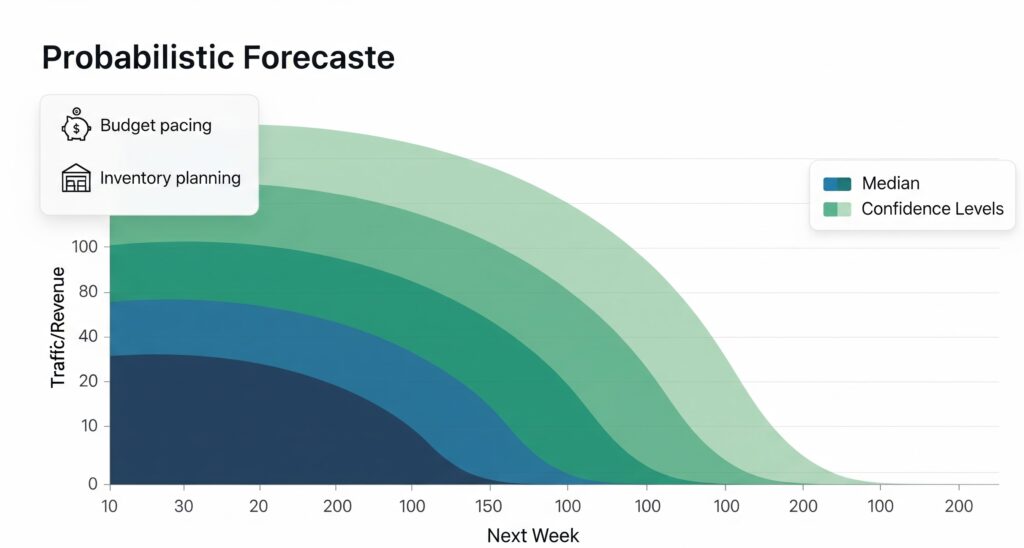
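
To make "learn your normal seasonality and alert on true outliers" concrete, here is a minimal sketch, not a production detector. It assumes daily event counts with a weekly cycle and compares each day only with other observations of the same weekday, using a robust z-score (median and MAD). The function name `weekly_anomalies` and the `threshold` default are illustrative assumptions, not part of any specific tool.

```python
from statistics import median


def weekly_anomalies(daily_counts, threshold=3.5):
    """Return indices of days that deviate strongly from the same weekday's history.

    daily_counts: daily event counts, oldest first. Only the 7-day cycle
    matters, so the series can start on any weekday.
    """
    anomalies = []
    for i, value in enumerate(daily_counts):
        # Compare each day with the other observations of the same weekday.
        peers = [v for j, v in enumerate(daily_counts) if j % 7 == i % 7 and j != i]
        if len(peers) < 3:
            continue  # not enough history to judge this day
        center = median(peers)
        mad = median(abs(v - center) for v in peers)
        # 1.4826 rescales MAD to a standard deviation under normality;
        # fall back to 1.0 when the peers are identical (MAD of zero).
        scale = 1.4826 * mad or 1.0
        if abs(value - center) / scale > threshold:
            anomalies.append(i)
    return anomalies
```

A tracking break typically shows up as a single weekday far below its peers, which this kind of check flags while leaving the usual weekend dip alone. Real systems usually add trend handling and holiday calendars on top.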

2) Forecasting that plans inventory & spend

- Use it for: Next-week traffic, conversions, revenue; budget pacing. For more on attribution, see our guide to attribution models.

- Why it works: Probabilistic forecasts model trend and seasonality and quantify uncertainty, which a simple moving average cannot.

- Prove it: Backtests with error metrics (MAPE, WAPE) and confidence intervals.

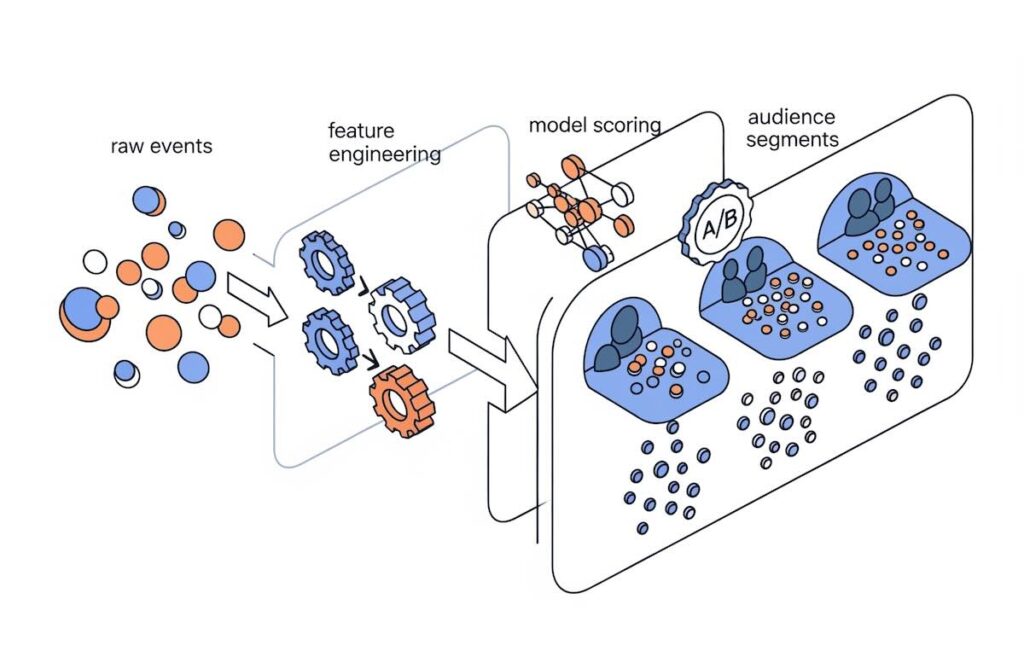
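
The backtest idea can be sketched in a few lines. The example below is an illustration under simple assumptions: a one-step-ahead, rolling-origin backtest over a daily series, scored with MAPE and WAPE. The helper names (`backtest`, `moving_average`, `seasonal_naive`) are hypothetical, and the two forecasters are deliberately simple baselines rather than probabilistic models.

```python
def mape(actuals, forecasts):
    """Mean absolute percentage error; periods with a zero actual are skipped."""
    pairs = [(a, f) for a, f in zip(actuals, forecasts) if a != 0]
    return sum(abs(a - f) / abs(a) for a, f in pairs) / len(pairs)


def wape(actuals, forecasts):
    """Weighted absolute percentage error: total absolute error over total actuals."""
    total_error = sum(abs(a - f) for a, f in zip(actuals, forecasts))
    return total_error / sum(abs(a) for a in actuals)


def backtest(series, forecast_fn, min_history=14):
    """Rolling-origin backtest: forecast each point using only the data before it."""
    actuals, forecasts = [], []
    for t in range(min_history, len(series)):
        forecasts.append(forecast_fn(series[:t]))
        actuals.append(series[t])
    return {"mape": mape(actuals, forecasts), "wape": wape(actuals, forecasts)}


def moving_average(history, window=7):
    """Baseline: mean of the last `window` observations."""
    return sum(history[-window:]) / window


def seasonal_naive(history, season=7):
    """Baseline: repeat the value from one season (here, one week) ago."""
    return history[-season]
```

Running `backtest(series, moving_average)` and `backtest(series, seasonal_naive)` on the same history gives a like-for-like comparison. A vendor model should be scored the same way, out of sample, before its forecasts drive budget pacing.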

3) Propensity & LTV-based segmentation

- Use it for: “Who will convert/churn/upgrade?” audience building and bidding.

- Why it works: Learns feature combos humans miss (recency, content, source mix).

- Prove it: A/B lift on CPA/ROAS and incremental revenue, not just model AUC.
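
As a rough illustration of "A/B lift, not just model AUC", the sketch below compares a holdout group with a model-targeted group. It uses conversion rate as the success metric for simplicity (CPA or ROAS would need cost and revenue data) and a standard two-proportion z-test. The function name and the example counts in the usage note are hypothetical.

```python
from math import erf, sqrt


def conversion_lift(control_conv, control_n, treated_conv, treated_n):
    """Relative conversion lift of treated vs. control with a two-proportion z-test."""
    p_c = control_conv / control_n
    p_t = treated_conv / treated_n
    # Pooled rate under the null hypothesis that both groups convert equally.
    pooled = (control_conv + treated_conv) / (control_n + treated_n)
    se = sqrt(pooled * (1 - pooled) * (1 / control_n + 1 / treated_n))
    z = (p_t - p_c) / se
    # Two-sided p-value from the standard normal CDF.
    p_value = 2 * (1 - 0.5 * (1 + erf(abs(z) / sqrt(2))))
    return {"relative_lift": (p_t - p_c) / p_c, "z": z, "p_value": p_value}
```

For example, `conversion_lift(200, 10_000, 260, 10_000)` compares a 2.0% holdout rate with a 2.6% targeted rate. A high AUC with no measurable lift in a test like this means the model ranks users well but is not changing outcomes.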

4) Content intelligence (NLP)

- Use it for: Topic clustering, intent mapping, search-to-page matching.

- Why it works: Transforms messy text (queries, site search, reviews) into themes.

- Prove it: Higher search CTR, better internal search satisfaction, time on relevant pages.

5) Journey stitching (privacy-safe)

- Use it for: Probabilistic identity within consented, first-party contexts.

- Why it works: Bayesian matching across devices/sessions.

- Prove it: Reduced duplicate users, clearer paths, improved attribution stability.
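
One way to picture Bayesian matching is as an odds update: start from a prior probability that two sessions belong to the same user, then multiply the odds by a likelihood ratio for each observed signal. The sketch below assumes the signals are independent (a naive Bayes simplification), and every probability in the usage note is a made-up value for illustration, not a measured rate.

```python
def match_probability(prior, signals):
    """Posterior probability that two sessions belong to the same user.

    prior: probability of a match before looking at any signal (0 < prior < 1).
    signals: list of (p_signal_if_same_user, p_signal_if_different_user) pairs,
             one per observed signal, treated as independent.
    """
    odds = prior / (1 - prior)
    for p_same, p_diff in signals:
        odds *= p_same / p_diff  # likelihood ratio for this signal
    return odds / (1 + odds)
```

With a hypothetical prior of 0.01 and two signals, say a shared consented login region `(0.9, 0.05)` and a matching browsing pattern `(0.8, 0.1)`, `match_probability(0.01, [(0.9, 0.05), (0.8, 0.1)])` comes out near 0.59. Whether that is high enough to stitch two sessions is a policy threshold you set and audit, not something the model decides.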

These wins typically come from AI-powered analytics embedded in your stack, not from generic dashboards with an “AI” label.

What’s mostly buzz (for now)

- “Autopilot optimization” promises with no guardrails or human review.

- Black-box “engagement scores” that don’t correlate with conversion or LTV.

- Chatbots that just rephrase charts—useful for access, not for strategy.

- Causal claims from clickstream alone. Without experiments or MMM, be skeptical.

- No backtesting. If a vendor won’t show out-of-sample results and error bars, pass.

The pros and cons of AI in marketing

Pros

- Scales insight discovery beyond human bandwidth

- Faster anomaly detection and triage

- More precise audiences and bidding

- Better forecasts for planning and cash flow

Cons

- Bias & leakage risks if features are sloppy

- Privacy & consent landmines; governance required

- Model drift and maintenance overhead

- “Overfitting to the past” when markets shift

If you need a talking-point list, the pros and cons of AI in marketing above are ones you can defend to finance and legal.

How to choose AI-powered data analytics solutions

- Start with one problem. “Lower CPA on paid search by 15%” beats “add AI.”

- Demand a proof-of-value plan. Baseline vs. model, test cells, success metric, timeline.

- Ask for a model card. Features used, training window, refresh cadence, known limits.

- Check MLOps & monitoring. Drift alerts, retraining policy, rollback path.

- Data protection. First-party only? PII handling, consent flows, data residency.

- Interoperability. Can you export features/scores? Does it write to your CDP/ads?

- Transparent pricing. Volume tiers, inference costs, hidden overages.

You’re not buying a slide; you’re buying measurable lift. Treat vendors as partners in an experiment.

A practical roadmap (making analytics and AI work together)

- Stage 0 — Tracking hygiene: Event taxonomy, consent, server-side collection.

- Stage 1 — Descriptive + QA: Dashboards + automated anomaly alerts.

- Stage 2 — Pilot AI-powered analytics: One use case (propensity, forecast, or anomaly).

- Stage 3 — Productionize: CI/CD for models, feature store, monitoring, documentation.

- Stage 4 — Scale & govern: Expand use cases, add approvals, periodic audits.

Metrics that actually matter for AI initiatives

- Business: Incremental revenue, CPA/ROAS lift, churn reduction, ARPU/LTV change.

- Causal evidence: Holdout or geo-A/B tests where feasible, with CUPED to reduce variance; otherwise quasi-experimental methods such as propensity-score matching.

- Model health: Precision/recall, calibration, population & concept drift.

- Operations: Time-to-detect anomalies, false-alert rate, analyst hours saved.
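
Population drift in particular is easy to monitor. One common approach, shown here as a sketch rather than a prescribed method, is the population stability index (PSI): bin the model scores (or a feature) from the training window, bin the current values the same way, and compare the two distributions. The equal-width binning and the small floor for empty bins are simplifying assumptions.

```python
from math import log


def psi(expected, actual, bins=10):
    """Population stability index between a reference sample and a current sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # avoid a zero width if all values are equal

    def shares(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values
        # A small floor keeps the logarithm defined for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * log(ai / ei) for ei, ai in zip(e, a))
```

A commonly cited rule of thumb treats PSI below 0.1 as stable and above 0.25 as a significant shift worth a retraining review, but the right alert threshold depends on your data. Concept drift (the relationship between features and outcome changing) needs outcome data and is not captured by this check.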

Governance, ethics, and privacy (non-negotiable)

- Consent first: Prefer first-party collection; disclose automated decisioning where required.

- Data minimization: Use the fewest features needed; rotate sensitive ones.

- Fairness checks: Segment-level performance; avoid proxy discrimination.

- Reproducibility: Version data, code, and configs; keep an audit trail.

FAQ

Do I need data scientists to benefit?

Not always. Many AI-powered data analytics solutions are turnkey. You still need an owner for data quality and testing.

Where does this leave my analysts?

They move up the stack—hypothesis design, experiment analysis, and storytelling—while machines handle rote detection and scoring.

Is this just for big companies?

No, but you must focus. One high-impact model in a mid-market stack can beat five half-baked ones in an enterprise suite.

Bottom line

AI won’t replace your analytics strategy; it amplifies it—if you anchor it to clean first-party data, solid experiments, and business metrics that pay the bills. Start small, measure honestly, and scale what proves real.